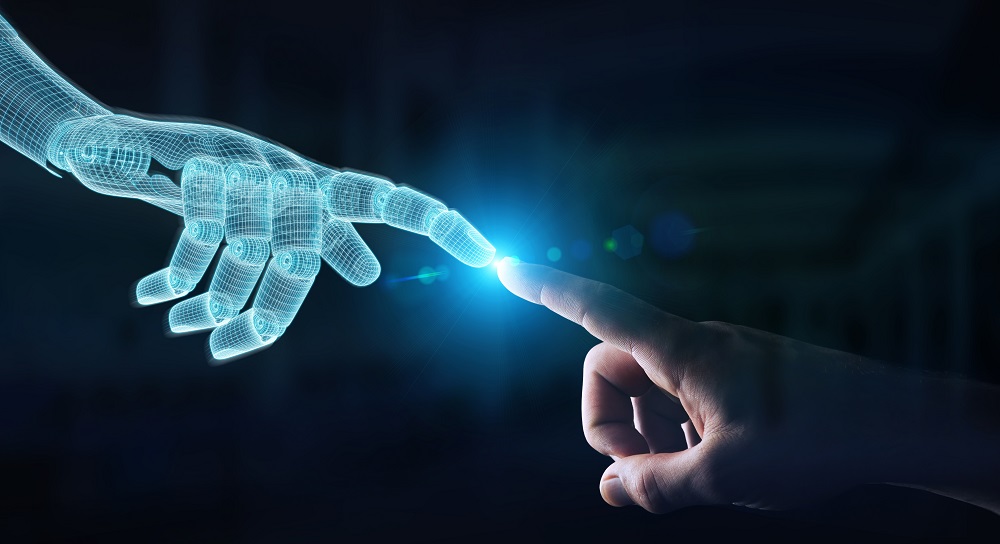

AI can identify illness, answer queries, and make business predictions. But what’s being called “emotional AI” — while not yet used widely — could be adopted sooner than you think.

If AI that can keep track of your habits isn’t invasive enough for you, imagine having a form of it that could monitor how you feel!

Why would anybody want that?

According to a guest writer for Tech Acute, the main argument for emotional AI is its potential to help companies deliver immediate, highly personal service. For example, if a customer is annoyed that wait staff have not served them promptly, a system could detect the frustration and signal staff to send help.

Or how about your laptop’s built-in webcam? It could feed emotional response data to emotional AI systems, helping online retailers gauge their hit-and-miss rate or trigger a direct, person-to-person customer service window.

The idea is to respond to a customer’s frustration before it reaches a point at which it could severely threaten an engagement or purchase.

But if you’re anything like our intrepid blogger Craig MacCormack, you’re likely not a fan of such a system. And if that’s the case, you’re surely not alone.

According to that Tech Acute article, many people find this concept downright disturbing.

A 2018 Gartner survey found that 70 percent of individuals were OK with AI evaluating their vital signs or helping make transactions more secure. However, the majority did not want AI to analyze their emotions or have an always-on function to understand their needs better.

Some analysts also worry that if emotional AI gauges how people feel often enough or provides responses with simulated feelings, it could give humanity an excuse not to stay connected. For example, if an AI tool could check on a loved one and send a report that says everything’s fine, a user could decide that’s enough information and not bother confirming it’s true.

What if a person has a disability that makes it hard to control their facial expressions, or perpetually grimaces because they’re in pain? Those things have nothing to do with the kind of service received. If emotional AI makes the wrong judgment, it could bring unwanted attention to the individual and cause embarrassment.

Integrators are often tasked with creating experiences for end users that are powerful enough to spark emotions. But if emotional AI systems ever do gain widespread use, should integrators make it their duty to help clients track those emotional responses?